Recursion is a method of solving problems by breaking a problem into smaller and smaller subproblems until you get to small that it can be solved trivially. Recursion most of the times involves a function calling itself.

Recursion laws:

- A recursive algorithm must have a base case

- A recursive algorithm must change its state and move toward the base case

- A recursive algorithm must call itself, recursively

Sum of a list of numbers iteratively and recursively:

Terminal result:

45 #--------------------------------- [1, 2, 3, 4, 5, 6, 7, 8, 9] [2, 3, 4, 5, 6, 7, 8, 9] [3, 4, 5, 6, 7, 8, 9] [4, 5, 6, 7, 8, 9] [5, 6, 7, 8, 9] [6, 7, 8, 9] [7, 8, 9] [8, 9] [9] 45

nums = [0,1,2,3,4,5,6,7,8,9] def sum_list(nums): """ITERATIVE solution Return the sum of the list of numbers""" total = 0 for n in nums: total += n return total sum_list(nums) #------------------------------------------------------------------ def sum_list(nums): """RECURSIVE solution Return the sum of the list of numbers""" if len(nums) == 1: return nums[0] else: print(nums[1:]) return nums[0] + sum_list(nums[1:]) sum_list(nums)

A classic example of recursion is the implementation of the Fibonacci series:

Such that each number is the sum of the two preceding ones, starting from 0 and 1.

Terminal result:

1 : 1 2 : 1 3 : 2 4 : 3 5 : 5 6 : 8 7 : 13

def fib(n): if n == 1: return 1 elif n == 2: return 1 elif n > 2: return fib(n-1) + fib(n-2) for n in range(1, 8): print(n, ":", fib(n))

However, this is not the optimal code. If calculating for n = 100, the computations repeat per number, taking minutes to reach the Fibonacci number for n = 100.

One way to make this simple function better is to add memoization. In other words, a way for the function to not repeat computation.

Terminal result:

1 : 1 2 : 1 3 : 2 4 : 3 5 : 5 6 : 8 7 : 13 . . . 100 : 354224848179261915075

fib_cache = {}

def fib(n):

if n in fib_cache:

return fib_cache[n]

if n == 1:

value = 1

elif n == 2:

value = 1

elif n > 2:

value = fib(n-1) + fib(n-2)

fib_cache[n] = value

return value

for n in range(1, 101):

print(n, ":", fib(n))

Search refers to find an item in a collection of objects.

This is the simplest search, which checks all the items from the collection to the search item in an unordered list. If the collection of item is sorted then in case the search item is not in place, the search algorithm can stop searching.

- The Time required is O(n), since we need to pass all elements from the list.

- The Space required is O(1), since we are only comparing onw value at a time.

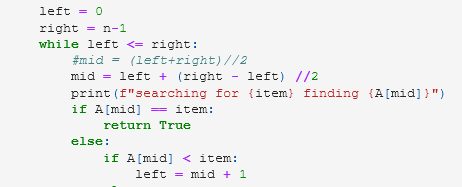

Binary search divide the collection of elements by half at every iteration.

| Search | Best case | Avg case | Worst case |

| Linear search (unordered list) | |||

| Item is present in the list | O(1) | O(n/2) | O(n) |

| Item is not present in the list | O(1) | O(n) | O(n) |

| Linear search (ordered list) | |||

| Item is present in the list | O(1) | O(n/2) | O(n) |

| Item is not present in the list | O(1) | O(n/2) | O(n) |

| Binary search (ordered list) | |||

| O(log n) | O(log n) | O(log n) |

Hash table is a collection of items which are stored in such a way to make it easy to find them later.

The mapping between an item and the slot where that item belongs in the hash table is called the hash function

If we have a hash table with 11 slots:

[None, None, None, None, None, None, None, None, None, None, None] 0 1 2 3 4 5 6 7 8 9 10

A simple hash function is the remainder method, which simply takes an item and divides it by the table size.

We can insert the six elements (54, 26, 93, 17, 77, 31) using the remainder method slot = item % hash_table

Six of the slots are now occupied meaning a load factor λ of 6/11

| Item | Hash Value |

| 54 | 10 |

| 26 | 4 |

| 93 | 5 |

| 17 | 6 |

| 77 | 0 |

| 31 | 9 |

[77, None, None, None, 26, 93, 17, None, None, 31, 54]

Now when we want to search for an item, we simply use the hash function to compute the slot position in the hash table. This searching operation is only O(1), since a constant amount of time is required to compute the hash value and then index the hash table.

However, this only works if the slot saves a single item. If two or more items need to be in the same slot, then we have a collision.

In the ideal case we want a perfect hash function mapping every element to a single slot. However, this is not possible for any arbitrary collection of items. Still, the search performance can be increased considerably.

The objective for a hash function is to minimize the number of collision, is eas to compute and evenly distributes the items in the hash table.

Other hash functions are: The folding method which divides the item into equal-size pieces. The pieces are then added together and then divided by the total number of slots.

Another numerical technique for constructing a hash function is the mid-square method. The items as squared and then some portion of the digits are removed. The resulting number is then divided by the number of slot in the table.

| Item | Remainder | Mid-Square |

| 54 | 10 | 3 |

| 26 | 4 | 7 |

| 93 | 5 | 9 |

| 17 | 6 | 8 |

| 77 | 0 | 4 |

| 31 | 9 | 6 |

For character-based hash function the ordinal values with weighting is a common practice. The word "dog" points to the ord('d') + ord('o')**2 + ord('g')**3 % number of slots.

The collision resolution is a systematic method for placing an item in a slot already occupied.

One method is to look into the hash table and try to accomodate the item that is causing the collision in another open slot. This method of linear probing uses open addressing resolution process, which moves the item through the slots until it finds an empty one. The process can result in a cycle, where the search goes back to the original hash value slot.

If we want to add elements 44, 55 and 20 to the previous table. We noticed that 44 should go to s = 0 but it is occupied so we move it one more until it finds an empty slot in the s = 1, in the same wasy 55 is stored in s = 2 and item 20 should go in the slot number 9 but it is occupied and it finds an empty slot until s = 3.

[77, 44, 55, 20, 26, 93, 17, None, None, 31, 54] 0 1 2 3 4 5 6 7 8 9 10

For preforming a search works in the same way. We need to use open addressing and linear probing and not only return True if the item is in the right slot or false if it is not. This technique tends to create clusters as noticed. In order to minimize the clustering, the process is not sequential one after another. Instead, we can skip slots for reducing potential clusters, "plus 3" will skip 3 slots when searching for an empty slot to save the item. This is know as rehashing (the process of looking for another slot after a collision).

It is important to note that the size of the 'skip' must be such that all the slots in the table will eventually be visited. To ensure this, the table size is a prime number.

| Search | Best case | Avg case | Worst case |

| Linear search (unordered list) | |||

| Item is present in the list | O(1) | O(n/2) | O(n) |

| Item is not present in the list | O(1) | O(n) | O(n) |

| Linear search (ordered list) | |||

| Item is present in the list | O(1) | O(n/2) | O(n) |

| Item is not present in the list | O(1) | O(n/2) | O(n) |

| Binary search (ordered list) | |||

| O(log n) | O(log n) | O(log n) |

An alternative method for handling the collision problem is to allow each slot to hold a chain of items, this method is called Chaining. When collision happen, the item is still placed in the proper slot of the hash table.

[ , None, None, None, , , , None, None, 31, 54] 0 1 2 3 4 5 6 7 8 9 10 | | | | | | 77 26 93 17 31 54 44 20 55

The map abstract data type is an unordered collection of associations between key - value pairs. They keys in a map are all unique so that there is a one-to-one relationship between key and a value.

Map() Creates a new empty map. Return an empty map collection.

put(key,val) Adds a new key-value pair to the map. If the key is already in the map, then replace the old value with the new value

get(key) Given a key, return the value stored in the map or None otherwise

del Delete the key-value pair from the map, i.e. del map[key]

len() Return the number of key-value pair stored in the map

in Return True if 'key in map' otherwise False

Sorting is the process of placing elements from a collection in a determine order. For example, a list with names can be aphabetically sorted by length. A list of schools can be sorted by number of students, average age, best grade overall, etc.

The Buble sort works as follows:

- Compare consecutive pairs of elements

- Swap elements in pair such that the smaller is first

- When reach the end of list start over again

- Stop when no more swaps have been made

- Largest unsorted element always at the end after a pass, so at most n passes

- The algorithm integrates as couple of for loops, O(n²)

Terminal result:

index A[0], 6 A[1], 5 index A[0], 5 A[2], 7 index A[0], 5 A[3], 4 index A[0], 4 A[4], 8 index A[0], 4 A[5], 3 index A[0], 3 A[6], 1 index A[0], 1 A[7], 9 index A[0], 1 A[8], 2 [1, 6, 7, 5, 8, 4, 3, 9, 2] index A[1], 6 A[2], 7 index A[1], 6 A[3], 5 index A[1], 5 A[4], 8 index A[1], 5 A[5], 4 index A[1], 4 A[6], 3 index A[1], 3 A[7], 9 index A[1], 3 A[8], 2 [1, 2, 7, 6, 8, 5, 4, 9, 3] . . . [1, 2, 3, 4, 5, 6, 7, 8, 9]

#-----------------------------

# We have list A

# with n = 9 elements

A = [6,5,7,4,8,3,1,9,2]

def bubble_sort(A):

'''Perform bubble sort and return the sorted list'''

n = len(A)

for i in range(n-1):

for j in range(i+1,n):

print(f"index A[{i}], {A[i]} A[{j}], {A[j]}")

if A[i] > A[j]:

A[i], A[j] = A[j], A[i]

print(A)

return A

print(bubble_sort(A))

The Selection sort works as follows:

- First step

- Extract minimum element

- Swap it with the element at index 0

- Subsequent steps

- In the remaining sublist, extract minimum element

- Swap it with the element at index 1

- Keep the left portion of the list sorted

- At i^th step, first i element in list are sorted

- All other elements are bigger that first i elements

- The algorithm integrates as couple of for loops or a single loop with min function O(n²)

Terminal result:

[6, 5, 7, 4, 8, 3, 1, 9, 2] ---------------------------- [1, 5, 7, 4, 8, 3, 6, 9, 2] [1, 2, 7, 4, 8, 3, 6, 9, 5] [1, 2, 3, 4, 8, 7, 6, 9, 5] [1, 2, 3, 4, 8, 7, 6, 9, 5] [1, 2, 3, 4, 5, 7, 6, 9, 8] [1, 2, 3, 4, 5, 6, 7, 9, 8] [1, 2, 3, 4, 5, 6, 7, 9, 8] [1, 2, 3, 4, 5, 6, 7, 8, 9] [1, 2, 3, 4, 5, 6, 7, 8, 9]

#-----------------------------

# We have list A

# with n = 9 elements

A = [6,5,7,4,8,3,1,9,2]

# Using min function

def selection_sort(A):

'''Perform selection sort with min() function

and return the sorted list'''

n = len(A)

for i in range(n):

min_element = min(A[i:])

min_element_index = A.index(min_element)

A[i], A[min_element_index] = A[min_element_index], A[i]

print(A)

print(A)

print("----------------------------")

selection_sort(A)